68 Million AI Crawler Visits: What the Data Really Says About AI Search Visibility

A new analysis of tens of millions of AI crawler visits suggests that AI search visibility starts with crawlable, useful, trusted websites. Here is what SMEs should do next.

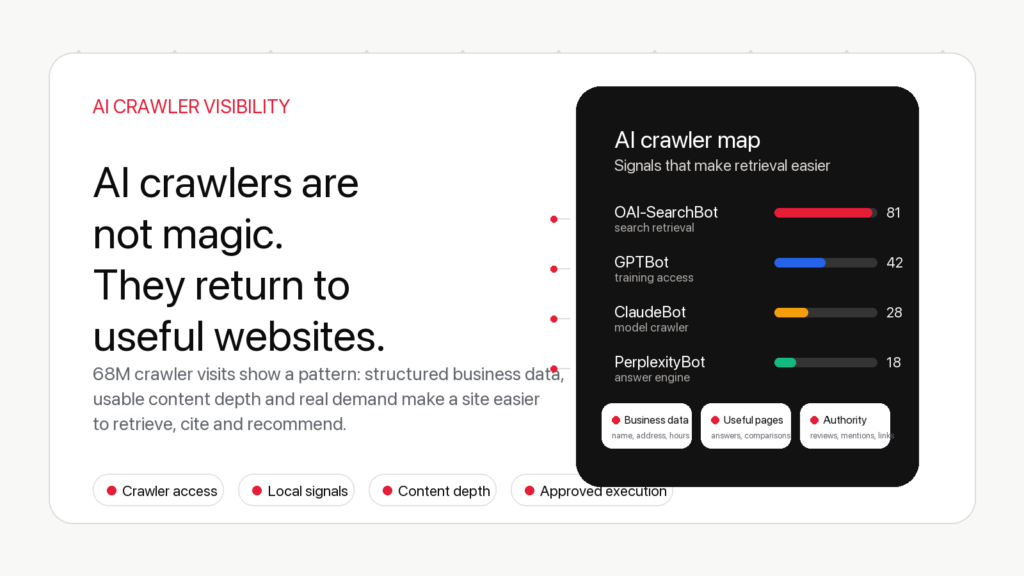

Short version: a large study covered by Search Engine Journal, based on Duda data, looked at tens of millions of AI crawler visits across a very large website sample. The most useful takeaway is not that a single magic ranking factor exists. It is that AI systems need websites they can crawl, understand, trust, retrieve and cite.

For business owners, the message is simple: AI search visibility is not a separate trick. It is the result of technical accessibility, clear business information, useful content, entity consistency, authority signals and continuous execution.


Why this matters

AI search is forcing marketers to look at something they used to ignore: how often non-traditional crawlers, bots and user-triggered fetchers interact with their websites. For years, most SEO reporting focused on Googlebot, index coverage, rankings and clicks. Those still matter. But AI-assisted search adds another layer: retrieval.

When a user asks an AI system for a recommendation, comparison or explanation, the system may need to retrieve pages, parse content, evaluate sources and decide what is useful enough to reference. That does not mean every crawler visit becomes a citation. It does mean websites now need to be easier to understand outside the classic blue-link SERP.

The Search Engine Journal article on 68 million AI crawler visits is interesting because it moves the conversation away from vague AI SEO theories and toward observable crawler behavior. The underlying Duda analysis looked at a very large set of websites and AI crawler activity, especially in local business contexts.

That kind of data is useful, but it must be read carefully. It can show patterns. It cannot prove that one isolated change guarantees AI visibility. In my opinion, the correct interpretation is this: AI visibility rewards the same businesses that are easiest to crawl, understand, evaluate and recommend.

From crawl access to usable answers

Weak AI visibility foundation

- Important pages hidden behind poor structure

- Thin service pages with vague claims

- Missing business details and weak entity signals

- Slow pages, messy internal links and blocked resources

Better retrieval foundation

- Clear pages that answer real user tasks

- Visible location, service, pricing and trust signals

- Structured content and consistent entity references

- Continuous monitoring and approved execution

What the study says

The SEJ coverage points to a central idea: AI crawlers are already active at significant scale. Duda’s published study describes activity across hundreds of thousands of websites and roughly 68 million AI crawler visits. The headline number matters because it confirms that AI search is not only a future interface. The crawling layer is already here.

The more useful part is not the size of the dataset alone. It is the relationship between crawler visibility and business signals. Local businesses that are easier to understand, better structured and more complete are more likely to be usable by systems that need to answer questions about services, locations and choices.

This aligns with what many SEO practitioners already see in the field. A website with a vague homepage, thin service pages, no clear location pages, weak reviews, inconsistent business information and poor internal linking is hard for humans to trust. It is also hard for retrieval systems to use confidently.

That is why the AI visibility conversation should not start with “How do I trick ChatGPT?” It should start with “Would an intelligent system understand what this business does, where it operates, who it serves, why it is credible and which page best answers the user’s question?”

Crawler visits are not citations

This is the part many businesses will misunderstand. A crawler visit is not a ranking. It is not a recommendation. It is not a guarantee that an AI assistant will mention your brand.

A crawler visit only means that a system requested something from your website. What happens after that depends on many layers: accessibility, parsing, content quality, source selection, user intent, freshness, authority, safety, product design and the retrieval model used by the platform.

For example, OpenAI’s documentation separates different crawler and user-agent purposes, including OAI-SearchBot, ChatGPT-User and GPTBot. Google also documents different crawler categories and controls, including its common crawlers and Google-Extended, which is a robots.txt control token rather than a separate crawler. These are not identical systems, and they do not all mean the same thing for search visibility.

That distinction matters. Some bots support search-related retrieval. Some are user-triggered. Some are used for model improvement or product features. A serious AI visibility strategy cannot treat every bot line in a log file as equal.
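To make that distinction concrete, here is a minimal Python sketch that groups log entries by what the bot is for rather than by raw bot name. The three tokens come from the OpenAI documentation mentioned above. The purpose labels are a simplified reading, and the function names and sample user-agent strings are hypothetical, so check them against each vendor's current documentation before relying on them.

```python
from collections import Counter

# Token -> purpose label. The tokens are named in the text above; the labels
# are a simplified assumption, not an official classification.
BOT_PURPOSES = {
    "OAI-SearchBot": "search retrieval",
    "ChatGPT-User": "user-triggered fetch",
    "GPTBot": "model improvement",
}


def classify_user_agent(user_agent: str) -> str:
    """Return a purpose label for a user-agent string, or 'other'."""
    ua = user_agent.lower()
    for token, purpose in BOT_PURPOSES.items():
        if token.lower() in ua:
            return purpose
    return "other"


def summarize(user_agents: list[str]) -> Counter:
    """Count requests per purpose instead of per raw bot name."""
    return Counter(classify_user_agent(ua) for ua in user_agents)


# Hypothetical user-agent strings, as they might appear in an access log.
sample = [
    "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)",
    "Mozilla/5.0 (compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot)",
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0; +https://openai.com/bot)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
]
print(summarize(sample))
```

A report built this way answers a more useful question than "how many bots visited": how many requests were tied to search retrieval, how many to a live user request, and how many to something else.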

What a business should measure

Crawler access: Can important pages be reached and rendered without unnecessary friction?

Content usefulness: Does the page answer a specific question better than a generic directory page?

Entity clarity: Are the business name, services, locations, people, products and authority signals consistent?

Outcome tracking: Are AI referrals, brand mentions and assisted conversions monitored separately from classic organic clicks?

What AI crawlers actually do

AI crawlers and AI-related fetchers are part of a larger retrieval ecosystem. Some systems crawl the web to build or update indexes. Some fetch pages in response to a user request. Some respect robots.txt controls in specific ways. Some identify themselves clearly, while others may appear through third-party infrastructure.

For a business owner, this can feel technical and abstract. But the practical questions are simple:

- Can these systems access your important pages?

- Can they understand the page without relying on broken scripts or hidden content?

- Can they identify the business, service, market and trust signals?

- Can they distinguish the best page for a user’s question?

- Can they find supporting pages that prove topical authority?

Google’s own guidance for generative AI features keeps returning to familiar fundamentals: make content accessible, useful, satisfying, technically sound and aligned with how Google can understand the page. The AI layer does not make fundamentals disappear. It makes weak fundamentals more expensive.

What SMEs should do now

Small and mid-sized businesses do not need a complex AI SEO department to respond to this shift. They need an operating model that turns visibility requirements into ongoing execution.

Start with crawlability. Important pages should not be blocked by robots.txt by accident, hidden behind heavy JavaScript, buried too deep in the site structure or left out of internal linking. A pediatric clinic page, a local service page, a product category page or an ecommerce buying guide must be easy to find, load and parse.
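A quick way to catch accidental robots.txt blocks is to test your important URLs against the rules for each crawler you care about. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content, the paths and the crawler list are all hypothetical examples, not a recommendation of what to allow or block.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Hypothetical paths and crawler tokens to check.
IMPORTANT_PATHS = ["/", "/services/pediatric-clinic", "/admin/login"]
CRAWLERS = ["OAI-SearchBot", "GPTBot"]


def blocked_pages(robots_txt: str, crawlers: list[str], paths: list[str]) -> list[tuple[str, str]]:
    """Return (crawler, path) pairs that the robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        (bot, path)
        for bot in crawlers
        for path in paths
        if not parser.can_fetch(bot, path)
    ]


for bot, path in blocked_pages(ROBOTS_TXT, CRAWLERS, IMPORTANT_PATHS):
    print(f"{bot} is blocked from {path}")
```

This only checks robots.txt rules. It does not tell you whether a page renders without JavaScript or is reachable through internal links, so treat it as the first check, not the whole audit.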

Then improve entity clarity. If your business serves Bucharest, sells flowers in Bragadiru, runs a medical clinic, provides parking, offers online booking or covers emergency services, those facts should be visible in normal HTML, not only implied in images or scattered across social profiles.

Next, build useful pages. AI systems do not need another generic page that says “we offer high quality services.” They need pages that answer real questions. A page about a private pediatric clinic should explain when to choose private care, how booking works, what parents compare, where the clinic is located, what trust signals exist and what the next step should be.

Finally, monitor changes continuously. AI crawler behavior, AI referrals, branded searches, Search Console impressions, AI answer mentions and content gaps should be reviewed as part of one workflow. Otherwise the business ends up with reports, but no execution.

Where many SEO tools stop

Most tools can show crawler activity, rankings, technical errors or content gaps. That is useful, but it leaves the hard part to the user. Someone still has to decide what matters, write the update, fix the page, improve internal links, prepare schema, adjust metadata, review the risk and publish the change.

That is the reason AI visibility is not only an analytics problem. It is an execution problem. If a business owner has to read dashboards for three hours, copy tasks into a spreadsheet and wait for a developer or SEO specialist, the loop is too slow.

AI search changes quickly. A slow execution loop means the website falls behind even when the report is technically correct.

The AYSA view: visibility needs execution, not panic

AYSA’s position is straightforward: AI search visibility should be treated as a workflow, not a guessing game.

The website must be monitored. Opportunities must be detected. The work must be prepared in plain language. The business owner should approve important changes. Then accepted changes should be executed inside the website workflow.

This is especially important for SMEs and non-specialists. They do not need another dashboard full of bot names. They need to know what to do next: improve this service page, add this missing comparison, fix this crawl problem, strengthen this internal link, clarify this business entity, update this FAQ, add this schema only when it matches visible content, or build this authority signal with approval.

In my opinion, the future of SEO is not “manual SEO versus AI SEO.” It is slow reporting versus approved execution. The sites that win will not be the ones that merely watch crawler logs. They will be the ones that turn those signals into better pages, cleaner structure, stronger authority and faster implementation.

What to avoid

Do not block AI crawlers blindly because the topic sounds scary. Do not allow everything blindly either. Understand what each crawler does, what your business goals are and what your legal/privacy requirements demand.

Do not create low-quality AI pages hoping crawler volume will translate into citations. That is the fastest way to create index bloat and weak brand signals.

Do not assume that adding schema alone creates AI visibility. Structured data helps only when it describes real visible content and supports a page that is already useful.
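One way to enforce the "schema must match visible content" rule is a simple automated check. The sketch below, using only Python's standard library, pulls JSON-LD blocks and visible text out of a page and reports schema values that never appear in the text a visitor can read. The sample page, the business details and the list of checked fields are hypothetical, and the check assumes each JSON-LD block is a single flat object.

```python
import json
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Collect visible text and JSON-LD blocks from an HTML document."""

    def __init__(self):
        super().__init__()
        self.visible_text = []
        self.json_ld = []
        self._buffer = []
        self._mode = "text"  # "text", "jsonld" or "skip"

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._mode = "jsonld"
        elif tag in ("script", "style"):
            self._mode = "skip"

    def handle_endtag(self, tag):
        if tag == "script" and self._mode == "jsonld":
            self.json_ld.append(json.loads("".join(self._buffer)))
            self._buffer = []
        if tag in ("script", "style"):
            self._mode = "text"

    def handle_data(self, data):
        if self._mode == "jsonld":
            self._buffer.append(data)
        elif self._mode == "text":
            self.visible_text.append(data)


def unsupported_schema_values(html: str, fields=("name", "telephone")) -> list[str]:
    """List schema fields whose values do not appear in the visible text."""
    parser = PageParser()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.visible_text).split()).lower()
    missing = []
    for block in parser.json_ld:
        if not isinstance(block, dict):
            continue  # this sketch only handles flat JSON-LD objects
        for field in fields:
            value = block.get(field)
            if isinstance(value, str) and value.lower() not in text:
                missing.append(f"{field}: {value}")
    return missing


# Hypothetical page: the phone number is in the schema but not on the page.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@type": "LocalBusiness", "name": "Example Flowers", "telephone": "+40 21 555 0100"}
</script>
</head><body>
<h1>Example Flowers</h1>
<p>Flower delivery in Bragadiru.</p>
</body></html>
"""

print(unsupported_schema_values(SAMPLE_HTML))
```

A non-empty result does not mean the schema is wrong. It means the page itself is missing a fact the markup claims, which is usually the thing to fix first.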

Do not measure only clicks. AI answers may influence discovery before a click happens. Track Search Console visibility, GA4 referral changes, branded searches, assisted conversions, AI mentions and real leads together.
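As a starting point for tracking these together, referral traffic can be split into channels so that AI-assistant referrals are not lost inside "other". The sketch below is a rough Python illustration. The hostname lists are assumptions chosen for the example and will need maintaining, and the `channel` function is hypothetical rather than part of GA4 or any analytics product.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames for illustration; verify and extend for your own data.
AI_REFERRER_HOSTS = {"chatgpt.com", "perplexity.ai", "gemini.google.com"}
SEARCH_HOSTS = {"google.com", "bing.com"}


def channel(referrer: str) -> str:
    """Map a referrer URL to a coarse traffic channel."""
    host = (urlparse(referrer).hostname or "").removeprefix("www.")
    if not host:
        return "direct"
    if host in AI_REFERRER_HOSTS:
        return "ai_referral"
    if host in SEARCH_HOSTS:
        return "organic_search"
    return "other_referral"


# Hypothetical referrer values exported from an analytics tool.
referrers = [
    "https://chatgpt.com/",
    "https://www.google.com/search?q=florist+bragadiru",
    "https://www.perplexity.ai/search/example",
    "",
    "https://example.org/blog",
]
print(Counter(channel(r) for r in referrers))
```

Referrer data undercounts AI influence, because many AI answers shape a decision without sending a click at all. That is why it belongs next to branded searches, mentions and leads rather than in place of them.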

The practical conclusion

The 68 million AI crawler visits headline is a useful wake-up call. AI systems are reading the web at scale. But the strategic lesson is not to chase bots. The lesson is to build websites that deserve to be retrieved.

That means fast pages, accessible HTML, clear business facts, strong topic coverage, good internal linking, visible trust signals, clean technical SEO and ongoing monitoring.

For SMEs, this is good news. You do not have to become an AI search engineer. You need a system that translates these requirements into approved website work. That is where AYSA fits: less manual SEO work, more organic growth, with AI visibility handled as part of the same execution loop.
