Google ALDRIFT and the End of Plausible AI Answers: What It Means for SEO

Google Research’s ALDRIFT work points toward AI systems that need more than fluent answers. Here is what it means for SEO, AI visibility, source trust and approved website execution.

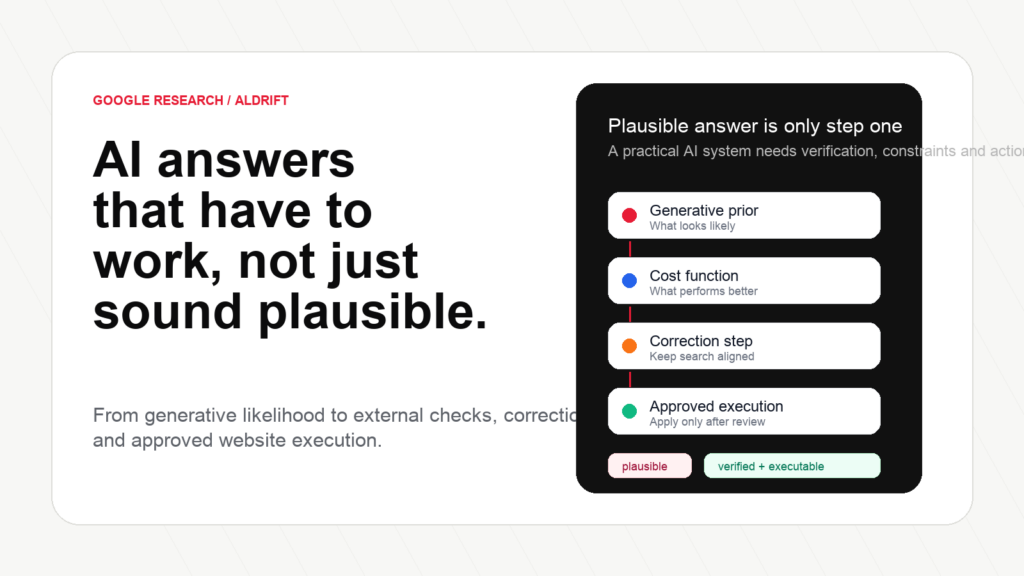

Executive summary: Search Engine Journal highlighted a Google Research paper about ALDRIFT, a research approach designed to move AI answers beyond “this sounds plausible” and toward outputs that can be evaluated against an external objective. That distinction matters for SEO because AI search systems need more than fluent text. They need useful, grounded, defensible answers that can survive a stronger quality check.

The practical lesson for businesses is not that ALDRIFT is currently running inside Google Search or AI Overviews. The paper is research, and we should not overclaim it. The lesson is bigger: the direction of AI search is moving toward systems that reward clarity, evidence, structure, entity consistency, source usefulness and continuous correction. Websites that still publish generic content, vague claims and disconnected pages are becoming easier to ignore.

What happened: Google Research is studying answers that can be checked

Search Engine Journal published an article about Google Research’s ALDRIFT work and framed it around a problem that has become familiar to anyone watching AI search: large language models can produce answers that are fluent, confident and plausible, while still being incomplete, weakly grounded or wrong.

That is not a small detail. In classic search, users often choose from a list of sources. In AI-assisted search, the answer interface may summarize, synthesize or recommend. If the answer layer becomes more prominent, then the difference between “sounds good” and “is actually useful” becomes commercially important for every brand, publisher and local business trying to be found.

The research paper behind the discussion is titled “Sample-Efficient Optimization over Generative Priors via Coarse Learnability”. It is a technical paper, but the business idea can be explained simply: instead of only asking a generative model to produce likely text, the system evaluates outputs against a desired objective and improves them more efficiently.

That should sound familiar to SEO teams. A page can look like an answer and still fail the real objective. It can include the keyword but fail the intent. It can define a topic but fail to help a buyer decide. It can mention a service but fail to prove the business can deliver it. It can be technically indexable but not useful enough to be selected, cited or recommended.

ALDRIFT is not an SEO tool. It is not a public Google ranking factor. It is not proof that Google Search currently uses this exact method inside AI Overviews or AI Mode. The useful takeaway is the direction: AI systems are being researched as systems that can evaluate, correct and optimize answers rather than merely generate them.

The model produces a likely answer from its generative prior. It may sound fluent before it is actually useful.

An external objective checks whether the output is closer to what the system actually wants.

The system samples, adjusts and improves instead of accepting the first plausible answer.

For websites, the same discipline becomes: prepare, review, approve and apply better answers.

What ALDRIFT means in plain English

The paper’s technical language is about optimization over generative priors and coarse learnability. For non-researchers, the phrase “generative prior” can be understood as the model’s learned sense of what kinds of outputs are likely. A language model has seen patterns in text and can generate something that looks like a plausible answer. But plausibility is not the same thing as correctness, usefulness or business value.

An “external objective” is the other side of the system. It is a way to score or evaluate whether the generated output is moving toward a desired target. In a simple analogy, imagine a designer generating many landing page layouts. Some look nice. But if the objective is conversion, readability, accessibility and trust, the best layout is not merely the prettiest. It is the one that performs against the criteria that matter.
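The landing page analogy can be sketched in a few lines of Python. This is only an illustration of scoring candidates against an external objective, not the method from the paper. The layout names, the scores and the weights are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layout:
    name: str
    visual_appeal: float  # what a "looks nice" judgment would reward
    conversion: float
    readability: float
    accessibility: float
    trust: float


# Hypothetical weights; a real objective would be measured, not guessed.
WEIGHTS = {"conversion": 0.4, "readability": 0.2, "accessibility": 0.2, "trust": 0.2}


def objective(layout: Layout) -> float:
    """Score a layout against the criteria that matter, ignoring looks."""
    return sum(weight * getattr(layout, name) for name, weight in WEIGHTS.items())


# Hypothetical candidates, standing in for many generated layouts.
candidates = [
    Layout("hero-video", 0.9, 0.3, 0.5, 0.4, 0.5),
    Layout("plain-form", 0.5, 0.7, 0.8, 0.9, 0.7),
    Layout("long-scroll", 0.7, 0.5, 0.6, 0.6, 0.6),
]

prettiest = max(candidates, key=lambda c: c.visual_appeal)
best = max(candidates, key=objective)

print(f"prettiest: {prettiest.name}, best against the objective: {best.name}")
```

With these made-up numbers the most attractive layout and the best-performing layout are different candidates, which is the whole point of checking against an objective instead of accepting what looks good.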

That distinction is central to the future of AI search. A fluent answer about “best pediatric clinic in Bucharest” is easy to generate. A useful answer needs more: location relevance, service type, review signals, emergency versus routine care, insurance or private appointment context, sources, local entities, and a careful explanation of when a user should choose one option over another. The answer must help someone decide, not only fill a box with words.

The same is true for B2B and technical topics. A fluent answer about “technical SEO audit” is not enough if it only says “check crawlability, metadata and page speed.” A useful answer explains which checks matter, which issues are risky, which fixes can be automated, which require human approval, and what the business should do after the audit.

This is why ALDRIFT is interesting for marketers. It reinforces a direction that smart SEO teams should already be moving toward: answer quality is not only about wording. It is about the relationship between claim, evidence, structure, source, action and correction.

The SEO implication: pages must become easier to verify, cite and use

Traditional SEO often focused on ranking signals: keywords, links, titles, crawlability, Core Web Vitals, schema, content depth and internal links. Those still matter. But AI-assisted search adds another layer: can a system understand the page well enough to use it as part of an answer?

This is where many websites are weak. They publish pages that are technically indexable but semantically vague. They make claims without evidence. They hide important details behind thin copy. They use generic service descriptions. They fail to connect related pages. They have no clear author or business entity. They do not show real examples. They do not update old pages when the market changes.

If AI systems become better at evaluating answer usefulness, those weaknesses matter more. A page that only says “we provide high-quality services” gives an answer system very little to work with. A page that explains who the service is for, where it is available, what the process looks like, what questions customers ask, what limitations exist, what evidence supports the claim and what related pages expand the topic is much more useful.

Google’s public guidance already points in this direction. Its documentation on helpful, reliable, people-first content asks whether content provides original information, research or analysis, whether it is substantial and complete, and whether users would trust it. Google’s post on AI-generated content says the focus is quality and helpfulness, not whether a human or AI assisted the production.

In other words, the public message and the research direction are compatible: content that merely sounds plausible is not enough. The winning page has to be useful under scrutiny.

The page explains who is speaking, why they know the topic and what evidence supports the claim.

Brand, service, location, author and product facts are consistent across the website.

The page gives direct answers, comparisons, examples, FAQs and next steps.

Crawlers can find, render, index and understand the content without unnecessary friction.

The page is monitored and updated when queries, competitors or search behavior change.

AI search is not only a content game. It is an operating system problem.

When marketers hear about AI answers, many immediately ask: “How do I rank in ChatGPT?” or “How do I get cited in AI Overviews?” Those are understandable questions, but they can lead to shallow tactics. The better question is: “Is our website a useful, trustworthy source that an answer system can understand, verify and use?”

That question includes SEO, AEO, GEO, content, authority, technical health and monitoring. A website cannot become more visible in AI-assisted search only by adding a paragraph that says “we are experts.” It needs pages that answer real questions, entities that are clear, internal links that connect meaning, structured data that matches visible content, external mentions that support authority, and a refresh process that keeps information current.

Google has also been expanding AI experiences in Search. Its announcements around AI Overviews and AI Mode describe systems that help users ask more complex questions and explore information with links to the web. For website owners, the important part is that AI search still depends on source quality. If your content is not clear, useful and credible, it becomes harder to appear in any answer layer, whether the interface is classic blue links, an AI Overview, an AI Mode response, or another answer engine.

There is no responsible way to guarantee inclusion in AI Overviews or answer engines. Nobody outside the systems can promise that. But there is a responsible way to improve eligibility: make the website easier to crawl, understand, trust, cite and recommend.

That is where the ALDRIFT discussion becomes practical. The more answer systems move toward external evaluation and correction, the less patience they will have for pages that only imitate usefulness.

What websites should do now

The response should not be panic. It should be discipline. Here is the practical work I would prioritize for a serious website.

1. Build pages around specific user decisions

Do not publish a page only because a keyword exists. Define the user, the stage of the journey and the decision the page should support. A commercial page should help a buyer choose. A local page should help a user decide where to go. A technical guide should help the reader understand risks, steps and next actions.

2. Add evidence that makes the answer stronger

Useful evidence can include examples, screenshots, process details, pricing guidance, delivery limitations, customer questions, case context, author credentials, source citations, product data and comparisons. A page becomes more useful when it reduces uncertainty.

3. Make entities explicit

AI search systems need to understand who you are, what you offer, where you operate, which products or services matter, who writes the content and how topics relate. Entity consistency across About, Contact, service pages, author pages, schema, Google Business Profile and external mentions is no longer optional for serious SEO.
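Entity consistency can be checked mechanically once the facts are collected in one place. The sketch below assumes the business name and phone number have already been gathered by hand from a few sources. The source labels, the example business and the normalization rules are hypothetical, and a real check would cover more fields.

```python
import re
from collections import defaultdict


def normalize(field: str, value: str) -> str:
    """Reduce formatting noise so only real differences remain."""
    value = value.strip().lower()
    if field == "phone":
        return re.sub(r"\D", "", value)  # keep digits only
    return re.sub(r"\s+", " ", value)  # collapse repeated whitespace


def find_inconsistencies(sources: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Return fields that have more than one distinct value, with the sources for each value."""
    seen: dict = defaultdict(lambda: defaultdict(list))
    for source, facts in sources.items():
        for field, value in facts.items():
            seen[field][normalize(field, value)].append(source)
    return {field: dict(variants) for field, variants in seen.items() if len(variants) > 1}


# Hypothetical facts copied from three places where the business is described.
sources = {
    "about_page": {"name": "Example Clinic", "phone": "+40 21 555 0100"},
    "contact_page": {"name": "Example Clinic", "phone": "+40215550100"},
    "schema_markup": {"name": "Example Clinic SRL", "phone": "+40 21 555 0100"},
}

issues = find_inconsistencies(sources)
for field, variants in issues.items():
    print(field, variants)
```

Here the phone numbers differ only in spacing, so they are treated as the same fact, while the two spellings of the business name are reported for review.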

4. Use schema carefully

Structured data should describe visible content, not invent signals. Organization, Article, BreadcrumbList, Product, FAQPage where the questions and answers are visible on the page, LocalBusiness where appropriate, and author data can help machines understand pages. But schema is not a replacement for useful content.
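One way to keep markup honest is to build it only from facts that appear on the page. The following is a minimal sketch using Python's standard `json` module and the schema.org Article type. The `build_article_jsonld` helper and the publisher name are hypothetical, and the property set is deliberately small.

```python
import json


def build_article_jsonld(visible: dict) -> str:
    """Return Article JSON-LD that contains only facts shown on the page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": visible["headline"],
    }
    # Optional properties are emitted only when the page actually shows them.
    if visible.get("author"):
        data["author"] = {"@type": "Person", "name": visible["author"]}
    if visible.get("publisher"):
        data["publisher"] = {"@type": "Organization", "name": visible["publisher"]}
    return json.dumps(data, indent=2, ensure_ascii=False)


page = {
    "headline": "Google ALDRIFT and the End of Plausible AI Answers: What It Means for SEO",
    "author": None,  # no byline is visible, so no author is emitted
    "publisher": "Example Publisher",  # hypothetical name for illustration
}

markup = build_article_jsonld(page)
print(markup)
```

The output would go inside a `<script type="application/ld+json">` element. Because the author is missing from the visible page, the markup leaves it out instead of inventing one.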

5. Strengthen internal links by meaning, not habit

Internal links should connect topics semantically. If a page mentions technical SEO, link to the technical SEO pillar. If a glossary term explains canonical tags, connect it to crawlability, duplicate content, indexability and related audit workflows. Internal linking should help both users and systems move through the knowledge graph of the site.
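A simple audit can surface missing links of this kind. The sketch below assumes a hand-maintained map from topic phrases to the pages that own them. The URLs and the `suggest_links` helper are hypothetical, and plain substring matching is a stand-in for whatever semantic matching a real workflow would use.

```python
# Hypothetical map from a topic phrase to the page that owns that topic.
TOPIC_PAGES = {
    "technical seo": "/technical-seo/",
    "canonical tag": "/glossary/canonical-tag/",
    "crawlability": "/glossary/crawlability/",
}


def suggest_links(page_url: str, text: str, existing_links: set[str]) -> list[str]:
    """List topic pages that the text mentions but does not link to yet."""
    lowered = text.lower()
    return sorted(
        target
        for phrase, target in TOPIC_PAGES.items()
        if phrase in lowered and target != page_url and target not in existing_links
    )


suggestions = suggest_links(
    page_url="/blog/audit-guide/",
    text="A technical SEO audit starts with crawlability and canonical tags.",
    existing_links={"/technical-seo/"},
)
print(suggestions)
```

The page already links to the technical SEO pillar, so only the two glossary pages are suggested. A person should still review each suggestion before it is added.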

6. Monitor AI visibility separately from classic rankings

Classic rank tracking is still useful, but it does not fully describe AI-assisted discovery. Brands need to monitor mentions, citations, answer coverage, query changes, competitor movement, AI Overview opportunities and content gaps. The reporting layer has to evolve.

7. Turn recommendations into approved execution

This is the part most companies underestimate. A report does not fix a page. A checklist does not update schema. A spreadsheet does not build authority. The operational advantage comes from turning recommendations into approved website actions.
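The idea of approved execution can be modeled as a small state machine in which nothing is applied without a review step. This is a hypothetical sketch of the general pattern, not a description of how any particular product works. The class names, statuses and the `fake_writer` function are invented for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    PREPARED = "prepared"
    APPROVED = "approved"
    REJECTED = "rejected"
    APPLIED = "applied"


@dataclass
class ProposedChange:
    page: str
    summary: str
    reason: str
    status: Status = Status.PREPARED

    def review(self, approved: bool) -> None:
        """Record a human decision on a prepared change."""
        if self.status is not Status.PREPARED:
            raise ValueError("only prepared changes can be reviewed")
        self.status = Status.APPROVED if approved else Status.REJECTED

    def apply(self, writer: Callable[["ProposedChange"], None]) -> None:
        """Apply the change, but only after it has been approved."""
        if self.status is not Status.APPROVED:
            raise PermissionError("change has not been approved")
        writer(self)
        self.status = Status.APPLIED


applied_log: list[str] = []


def fake_writer(change: ProposedChange) -> None:
    """Stand-in for a real CMS update; it only records what would change."""
    applied_log.append(f"{change.page}: {change.summary}")


change = ProposedChange("/services/audit/", "Add pricing guidance section", "Buyers ask about cost")
change.review(approved=True)
change.apply(fake_writer)
print(change.status, applied_log)
```

Calling `apply` on a change that was never reviewed raises `PermissionError`, which is the guard that separates a recommendation from an executed action.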

Plausible but weak

“A technical SEO audit checks your website for issues like page speed, metadata and crawlability.”

Problem: It sounds correct, but it does not help the user understand priority, risk, execution or what happens next.

Useful and executable

Where AYSA fits: from answer readiness to approved execution

AYSA is built around a simple belief: SEO should move from research to approved action. The AI search era makes that belief more important, not less. If answer systems require clearer sources, better structure, stronger evidence and continuous correction, companies need a workflow that can keep up.

AYSA can help identify pages that receive impressions but do not answer the query well, topics where the business lacks enough coverage to build authority, weak internal links between related pages, service pages that lack clear pricing or process information, FAQ opportunities for answer readiness, schema opportunities that match visible content, technical issues that reduce crawlability or indexability, authority-building opportunities that need review and approval, and AI visibility gaps where the brand is not easy to identify, cite or recommend.

The important part is what happens next. AYSA does not only show the issue. It prepares the work, explains why it matters, asks for approval and can execute accepted changes inside the website workflow. That is the difference between a discoverability report and a discoverability operating system.

For business owners, the benefit is practical. You do not need to become a full-time SEO specialist, read every AI search paper, or manually copy recommendations from one tool into WordPress. You need a system that monitors, prepares, explains, asks for approval and executes. That is the operational layer AYSA is building.

What ALDRIFT does not mean

It is worth being precise. ALDRIFT does not mean that traditional SEO is dead. It does not mean that links, crawlability, technical SEO or content strategy stop mattering. It does not mean that every AI-generated answer will become reliable overnight. It does not prove that Google Search uses this exact method in production.

It does mean that “good enough text” is becoming a weaker strategy. The direction is toward systems that can evaluate, compare, correct and optimize. Websites should respond by becoming more useful, better structured, more trustworthy and easier to act on.

Final take

The old content race rewarded teams that could publish quickly. The next visibility race rewards teams that can publish usefully, prove what they say, connect their knowledge, monitor change and execute improvements repeatedly.

ALDRIFT is a research signal, not a marketing shortcut. But the signal is clear: plausible AI answers are not the destination. Useful, checkable, source-worthy answers are.

Less SEO work. More organic growth.

Turn AI visibility research into approved website action.

AYSA monitors your website, prepares SEO, AEO and AI visibility improvements, asks for approval and executes accepted changes inside your website workflow.

