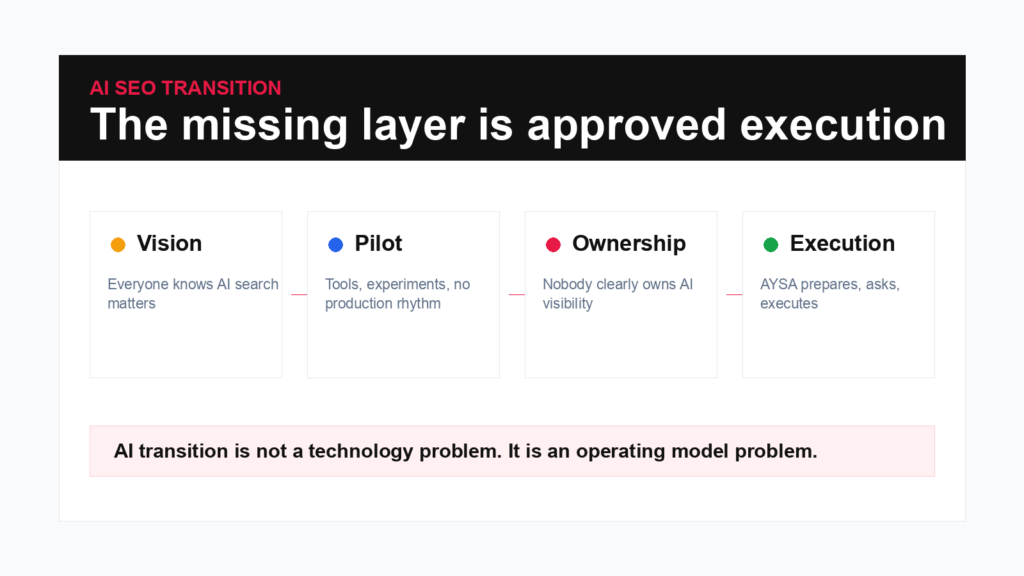

Why SEO Teams Are Stuck in the AI Transition: The Missing Layer Is Approved Execution

The AI SEO transition is not blocked by a lack of tools. It is blocked by ownership, process, measurement and execution. Here is the operating model businesses need.

Executive summary

The reason many SEO teams have not made the AI transition is not that they lack AI tools. It is that they lack an operating model. Search is changing faster than most teams can turn those changes into production work: AI Overviews, answer engines, entity-based retrieval, brand mentions, citation visibility, content architecture and classic SEO all need to be managed together. The bottleneck is ownership, approval and execution.

- SEO fundamentals still matter: crawlability, indexability, content quality, internal links, structured data, authority and measurement.

- AI search adds new surfaces: AI Overviews, answer engines, citations, brand mentions, source clarity and entity recognition.

- Most teams are stuck in pilot mode because nobody owns the full workflow from monitoring to approved website change.

- The transition succeeds when AI visibility is treated as an execution system, not a dashboard experiment.

- AYSA is built for this gap: monitor, prepare, explain, ask for approval and execute accepted changes inside the website workflow.

A recent Search Engine Journal article made a useful point: the AI transition in SEO is not only a technology problem. Many teams have access to tools, dashboards, content generators and AI visibility trackers, yet their day-to-day SEO process still looks almost the same. The issue is not awareness. Everyone knows AI search matters. The issue is that awareness has not become a production workflow.

This distinction matters. The easiest version of the AI SEO conversation is to say “buy an AI tool.” The harder and more honest version is to ask: who owns AI visibility, what gets measured, what work gets prepared, who approves public website changes, and how do accepted changes get executed without disappearing into a spreadsheet?

The transition from classic SEO to SEO plus AI visibility is not a replacement. It is an expansion. Technical SEO still matters. Content still matters. Links still matter. Search Console still matters. But the system now also has to think about answer readiness, entity clarity, source recognition, AI citations, AI Overviews, brand mentions and content that can be understood by both search engines and generative systems.

The real problem is not AI adoption

Most serious SEO teams have already adopted AI at some level. They use it for drafts, outlines, briefs, clustering, research, metadata, technical explanations, code snippets, content QA, reporting summaries or idea generation. That is not the same as making the AI transition.

Using AI inside old workflows can make old workflows faster. It does not automatically make the business ready for AI search. If the team still measures only rankings, publishes content without entity strategy, ignores answer surfaces, lacks a process for AI visibility gaps and cannot implement approved recommendations quickly, then the transition is still unfinished.

The real transition is operational. It requires a new loop:

- Monitor classic search and AI-assisted discovery.

- Detect gaps in rankings, AI mentions, answer readiness and entity clarity.

- Prepare concrete website actions.

- Explain why each action matters.

- Ask for approval when the public website changes.

- Execute accepted changes.

- Measure what changed.

That loop is where many teams fail. They can identify the issue, but they cannot turn it into consistent execution.
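The loop above can be sketched in a few lines of Python. This is a minimal illustration rather than a description of any particular product: the `Action` record, the gap rule in `detect_gaps` and the signal names `has_direct_answer` and `mentioned_in_ai` are all assumptions made up for the example. Monitoring is represented by the input data, and measurement by the printed summary.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    page: str                 # URL path the change applies to
    change: str               # what would be changed on the page
    reason: str               # explanation shown to the approver
    status: str = "proposed"  # proposed -> approved or rejected -> executed


def detect_gaps(pages: dict) -> list:
    """Return pages whose monitored signals show a gap (hypothetical rule)."""
    return [url for url, signals in pages.items()
            if not signals["has_direct_answer"] or not signals["mentioned_in_ai"]]


def prepare_actions(gaps: list) -> list:
    """Turn each gap into a concrete, explained website action."""
    return [Action(page=url,
                   change="Add a short answer section near the top",
                   reason="Page lacks a direct answer or is absent from AI answers")
            for url in gaps]


def execute(action: Action) -> None:
    """Refuse to touch the site unless the action was approved."""
    if action.status != "approved":
        raise ValueError("only approved actions may be executed")
    # A real system would update the CMS here; this sketch only records it.
    action.status = "executed"


def run_loop(pages: dict, approve: Callable[[Action], bool]) -> list:
    actions = prepare_actions(detect_gaps(pages))
    for action in actions:
        action.status = "approved" if approve(action) else "rejected"
        if action.status == "approved":
            execute(action)
    return actions


# Monitoring output for three pages (invented sample data).
monitored = {
    "/pricing": {"has_direct_answer": True, "mentioned_in_ai": True},
    "/services/audit": {"has_direct_answer": False, "mentioned_in_ai": True},
    "/blog/ai-search": {"has_direct_answer": True, "mentioned_in_ai": False},
}

# The approver is a plain function here; in practice it would be a person.
results = run_loop(monitored, approve=lambda a: a.page != "/blog/ai-search")
for a in results:
    print(a.page, a.status)
```

The point of the sketch is the guard in `execute`: preparation can be automated freely, but nothing reaches the site without passing through the approval step.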

SEO foundations did not disappear

One of the most dangerous ideas in AI search is that classic SEO no longer matters. That is wrong. Google’s own documentation still emphasizes helpful content, crawlability, structured data where appropriate, page experience, links and making content easy for users and search systems to understand.

AI search does not remove the need for foundations. It increases the cost of weak foundations. If a site is hard to crawl, poorly structured, thin, duplicated, slow, confusing or unsupported by trustworthy signals, it is not suddenly strong because AI search exists.

The best AI visibility work usually starts with classic SEO questions:

- Can search systems access and render the page?

- Is the page indexable and canonicalized correctly?

- Does the page answer a real query or need clearly?

- Is the content original, useful and accurate?

- Does the site show topical depth?

- Are related pages connected by internal links?

- Does the page make the brand, author, service, location or product easy to identify?

- Are structured data and visible content aligned?

AI search expands the surface, but it does not forgive weak execution.
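The first two questions in that list can be checked mechanically. The sketch below uses only the Python standard library and works on a page's HTML and a robots.txt body passed in as strings, so it makes no network requests. The `check_page` helper, the sample URLs and the sample markup are assumptions for illustration. It does not render JavaScript, so it covers access and indexability signals only, not rendering.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class HeadSignals(HTMLParser):
    """Collects the robots meta directive and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = ""
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.robots = (attr.get("content") or "").lower()
        elif tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")


def check_page(url, html, robots_txt, user_agent="*"):
    """Report whether a page is crawlable, indexable and self-canonical."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    head = HeadSignals()
    head.feed(html)
    return {
        "crawlable": rules.can_fetch(user_agent, url),
        "indexable": "noindex" not in head.robots,
        "canonical_matches": head.canonical == url,
    }


# Invented sample inputs.
robots_txt = "User-agent: *\nDisallow: /private/\n"
html = """<html><head>
<meta name="robots" content="index, follow">
<link rel="canonical" href="https://example.com/guide">
</head><body><h1>Guide</h1></body></html>"""

report = check_page("https://example.com/guide", html, robots_txt)
blocked = check_page("https://example.com/private/report", html, robots_txt)
print(report)
print(blocked)
```

A canonical that points elsewhere is not always an error, so a `False` in `canonical_matches` is a prompt to review the page, not a verdict.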

What AI search adds to the workload

AI-assisted search adds new work because users no longer encounter information only through a ranked list of links. They may see synthesized answers, AI Overviews, AI Mode-style interactions, conversational recommendations, cited sources, social content pulled into answers, local summaries or direct answers that compress several pages into one response.

This creates additional SEO tasks:

- Answer readiness: pages need direct, extractable answers to important questions.

- Entity clarity: brands, people, products, services and locations need to be easy to identify.

- Source recognition: the website should look like a credible source, not a pile of isolated URLs.

- Topic coverage: shallow coverage makes it harder to become associated with a subject.

- AI visibility monitoring: teams need to know whether the brand appears, is cited or is absent in important AI answers.

- Content refresh velocity: outdated content becomes a larger risk when AI systems synthesize answers from current sources.

- Approval workflows: AI-suggested changes still need governance before public publishing.

This is why the transition is hard. It is not one new task. It is a new layer across all existing tasks.

Why SEO teams stall

There are five common reasons teams get stuck.

1. Pilot mode becomes permanent

The team tests AI tools, creates demos, builds a few prompts and maybe produces a report. But the work never becomes part of the weekly operating cadence. There is no owner, no threshold for action and no connection to publishing.

2. Measurement is unclear

Classic SEO has familiar metrics: rankings, clicks, impressions, CTR, conversions and revenue. AI visibility is less mature. Teams may track citations, mentions, answer presence, source frequency or share of voice, but they do not always know how those metrics connect to business outcomes.
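One way to make the newer metrics less abstract is to compute them next to a familiar one. The sketch below calculates CTR from clicks and impressions, then a brand mention rate and a citation rate over a set of tracked prompts. The prompt list, the field names `brand_mentioned` and `domain_cited` and all numbers are invented for illustration, and the calculation says nothing about how these rates relate to revenue.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate: clicks divided by impressions."""
    return clicks / impressions if impressions else 0.0


def presence_rate(answers: list, key: str) -> float:
    """Share of tracked AI answers in which the given flag is true."""
    if not answers:
        return 0.0
    return sum(1 for answer in answers if answer[key]) / len(answers)


# Hypothetical tracking results for four priority prompts.
tracked_answers = [
    {"prompt": "best seo audit tool", "brand_mentioned": True, "domain_cited": True},
    {"prompt": "how to fix crawl errors", "brand_mentioned": True, "domain_cited": False},
    {"prompt": "what is entity seo", "brand_mentioned": False, "domain_cited": False},
    {"prompt": "ai overview optimisation", "brand_mentioned": False, "domain_cited": False},
]

mention_rate = presence_rate(tracked_answers, "brand_mentioned")
citation_rate = presence_rate(tracked_answers, "domain_cited")

print(f"CTR: {ctr(120, 4000):.1%}")
print(f"AI mention rate: {mention_rate:.1%}")
print(f"AI citation rate: {citation_rate:.1%}")
```

Tracking the same prompt set over time matters more than the absolute values, because the rates depend entirely on which prompts are chosen.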

3. Ownership is fragmented

AI search touches SEO, content, PR, product marketing, analytics, brand, engineering and legal. When ownership is spread across departments, nobody owns execution end to end.

4. Content teams are already overloaded

AI search asks for better content, not just more content. That means refreshes, entity pages, FAQ improvements, internal links, structured data, author pages, comparison pages and source clarity. Teams already struggling with classic SEO cannot absorb the extra layer without a better workflow.

5. Recommendations do not become changes

This is the biggest failure. The team knows what to do, but the work sits in a backlog. Developers are busy. Editors need context. Business owners delay decisions. Agencies send reports. Tools show alerts. The website does not change.

The last point is where AYSA’s positioning becomes obvious: the missing layer is approved execution.

Why smaller businesses cannot copy enterprise playbooks

Enterprise teams can assign AI visibility owners, run workshops, build dashboards, create cross-functional scorecards and hire specialists. Smaller businesses, ecommerce stores, local businesses, clinics, publishers and agencies often cannot copy that model. They do not have the headcount.

That does not mean they can ignore the transition. It means the transition has to be compressed into a simpler workflow. A business owner does not want to manage ten SEO dashboards. A marketer does not want to manually copy AI suggestions into WordPress. A publisher does not want another reporting layer that creates work but does not publish anything.

The practical model for smaller teams is:

- use automation to monitor and prepare the work;

- keep human approval for important public changes;

- execute accepted actions directly inside the website workflow;

- measure outcomes without requiring a full SEO department.

In other words: the business does not need to become an AI SEO lab. It needs an AI SEO operating system.

The operating model that works

A serious AI SEO operating model has four layers.

Layer 1: Foundations

Technical SEO, crawlability, indexability, content quality, internal links, schema, speed, authority and Search Console data remain the base. If these are weak, AI visibility work becomes fragile.

Layer 2: AI visibility

The team monitors answer engines, AI Overviews, AI search mentions, brand presence, competitor citations, entity gaps and answer-readiness opportunities. This layer asks: where should the brand be visible, and where is it missing?

Layer 3: Approval

Not every recommendation should be published automatically. Some changes affect brand, legal, medical, financial or commercial claims. The workflow should explain the action and ask for approval before execution.

Layer 4: Execution

Accepted changes should become website updates: titles, descriptions, content sections, FAQs, schema, internal links, technical fixes, redirects, content plans, authority opportunities and monitoring tasks.

When these layers are disconnected, the team gets reports. When they are connected, the team gets progress.
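The connection between the approval and execution layers can be expressed as a simple gate. The sketch below is one possible policy, not a prescribed one: changes that touch a sensitive area are held until someone approves them, while other changes pass through. The `Change` record, the `SENSITIVE_AREAS` set and the sample changes are assumptions for illustration, and a team could just as reasonably require approval for every public change.

```python
from dataclasses import dataclass, field

# Areas named in the approval layer as needing human review (assumed policy).
SENSITIVE_AREAS = {"brand", "legal", "medical", "financial", "commercial"}


@dataclass
class Change:
    target: str                              # page the change applies to
    summary: str                             # explanation shown to the approver
    areas: set = field(default_factory=set)  # claim areas the change touches
    approved: bool = False


def needs_approval(change: Change) -> bool:
    """A change needs sign-off when it touches any sensitive area."""
    return bool(change.areas & SENSITIVE_AREAS)


def execute(change: Change, published: list) -> None:
    """Publish the change, or refuse if required approval is missing."""
    if needs_approval(change) and not change.approved:
        raise PermissionError(f"{change.target}: approval required before publishing")
    # A real system would write to the CMS; this sketch records the target only.
    published.append(change.target)


published = []
alt_text = Change("/about", "Add missing image alt text")
claim = Change("/treatments", "Rewrite efficacy claim in intro", areas={"medical"})

execute(alt_text, published)   # touches no sensitive area, goes through
try:
    execute(claim, published)  # held until someone approves it
except PermissionError as err:
    print("held:", err)

claim.approved = True
execute(claim, published)
print(published)
```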

Where AYSA fits

AYSA is designed around the idea that SEO and AI visibility should move from research to approved action. The product is not only a report generator. It is an execution agent connected to the website workflow.

In the AI transition context, AYSA can help a business:

- monitor classic SEO and AI visibility opportunities;

- identify pages that do not answer important queries clearly;

- detect missing topics and weak topical authority;

- prepare content improvements for SEO, AEO (answer engine optimization) and GEO (generative engine optimization);

- suggest internal links and schema opportunities;

- review technical SEO blockers that reduce crawlability or indexability;

- surface authority-building opportunities that need approval;

- turn accepted recommendations into website execution.

The key is not blind autopilot. The key is autonomous preparation and execution after approval. The user should not need to spend hours interpreting dashboards, but the user should remain in control of important publishing decisions.

This is how smaller teams can make the AI transition without behaving like an enterprise SEO department. They can let the agent do the operational heavy lifting while they approve the decisions that matter.

A 90-day transition scorecard

If a company wants to move from AI SEO experimentation to real execution, a 90-day scorecard is more useful than a vague strategy deck.

Days 1-30: baseline the system

- Connect Search Console, analytics and website crawl data.

- Identify technical blockers, duplicate content, missing metadata and weak internal links.

- Audit entity clarity: brand, founder, authors, products, services and locations.

- Review AI visibility for priority topics and competitors.

- Create a first list of approval-ready actions.

Days 31-60: prepare and ship the first batch

- Refresh pages with impressions but weak answers.

- Add answer-ready sections where users need direct clarity.

- Improve internal links across topic clusters.

- Prepare schema opportunities that match visible content.

- Create missing pages for important topics.

- Approve and execute the first batch of changes.
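For the schema item in the list above, one way to keep markup and visible content aligned is to generate the markup from the same question and answer pairs that appear on the page. The sketch below builds schema.org `FAQPage` JSON-LD and checks that every marked-up string is present in the page text. The helper names `faq_jsonld` and `is_aligned` and the sample page text are assumptions for illustration, and valid markup does not guarantee any particular search feature.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from visible (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def is_aligned(markup, visible_text):
    """True when every marked-up question and answer also appears on the page."""
    return all(
        item["name"] in visible_text
        and item["acceptedAnswer"]["text"] in visible_text
        for item in markup["mainEntity"]
    )


# Question and answer as they would appear in the page copy.
pairs = [
    ("Is AI SEO replacing traditional SEO?",
     "No. AI SEO expands traditional SEO."),
]
page_text = "FAQ Is AI SEO replacing traditional SEO? No. AI SEO expands traditional SEO."

markup = faq_jsonld(pairs)
print(json.dumps(markup, indent=2))
print("aligned:", is_aligned(markup, page_text))
```

The serialized output would go inside a `script` element of type `application/ld+json` on the page.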

Days 61-90: operate continuously

- Monitor rankings, clicks, CTR, AI mentions and competitor movement.

- Repeat research and content planning monthly.

- Turn new findings into approval batches.

- Measure which approved changes improved visibility or conversions.

- Refine the workflow so execution becomes routine.

The scorecard should not ask “did we use AI?” It should ask “did AI help us prepare and execute better search work?”

FAQ

Is AI SEO replacing traditional SEO?

No. AI SEO expands traditional SEO. Crawlability, content quality, internal links, structured data, authority and measurement still matter. AI search adds new visibility surfaces and new execution requirements.

Why are SEO teams slow to transition to AI search?

Many teams are slow because ownership, measurement and execution are unclear. They may test tools, but they do not have a workflow that turns AI visibility insights into approved website changes.

What should an AI SEO workflow include?

It should include monitoring, gap detection, content and technical recommendations, approval controls, website execution and measurement across both classic SEO and AI-assisted discovery.

Can small businesses make the AI SEO transition?

Yes, but they need a simpler operating model than enterprise teams. They need automation that prepares the work and asks for approval, not a large internal SEO department.

How does AYSA help?

AYSA monitors the website, prepares SEO and AI visibility actions, explains why they matter, asks for approval and executes accepted changes inside the website workflow.

The AYSA point of view

The AI transition in SEO will not be won by the team with the most dashboards. It will be won by the team that can turn search intelligence into approved website action consistently.

That is the practical future of SEO: not less strategy, not blind automation, not generic AI content, but a connected execution workflow where the agent does the operational work and the business keeps approval control.

Less SEO busywork. More organic growth.

Sources and further reading

- Search Engine Journal: The real reason your SEO team has not made the AI transition yet

- Google Search Central: SEO Starter Guide

- Google Search Central: Creating helpful, reliable, people-first content

- Google Search Central: AI features and your website

- Google Search Console Help: Performance report